6-Step Process | Step 2 — Prepare Modeling Dataset (1/2)

Welcome back to a series of articles on promotion effectiveness measurement and promotion planning! In Part 2, I discussed Step 1 of the overall process: (1) build a data foundation. Today, I will continue to discuss the next step—Step 2: (2) prepare a modeling dataset.

In case you missed the previous articles, links to Part 1 and Part 2 of this series are shown below.

Step 2: Prepare a Modeling Dataset

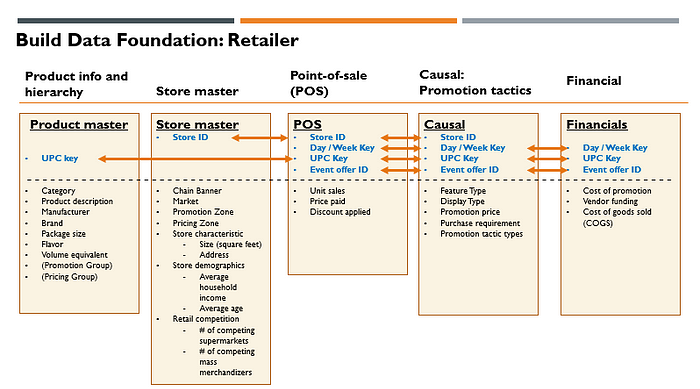

Foundational data collected in the central data repository for promotion effectiveness modeling are at a lower grain than the required modeling dataset. Thus, we need to properly aggregate the data at the level of modeling grain. This design: lower grain foundational datasets and a higher grain modeling dataset, is intentional for us to ensure flexibility in the choice of modeling grains and feature engineering in the modeling dataset preparation stage. It is always possible to aggregate the data, but we can’t disaggregate data once aggregation is conducted.

In addition to aggregation, we need to create new data columns (i.e., new features), which align with modeling specifications. Therefore, aggregation, filtering, and feature engineering are required to go from foundational data to the modeling dataset. This step involves (1) data quality checks such as outlier analyses and summary statistics, and visual inspection, (2) (store) clustering analyses if promotion zones or pricing zones were not defined before, (3) data filtering and aggregation, (4) feature engineering to create derived columns, and (5) post modeling dataset checks to ensure data aggregation and feature engineering are conducted properly: e.g., data join checks, summary statistics checks, and time trend checks. Before describing these in detail, I have summarized the design consideration for the modeling dataset

- Modeling grain (proper aggregation)

There are 3 primary dimensions that define the aggregation level of modeling grain. I have described recommended choices below.

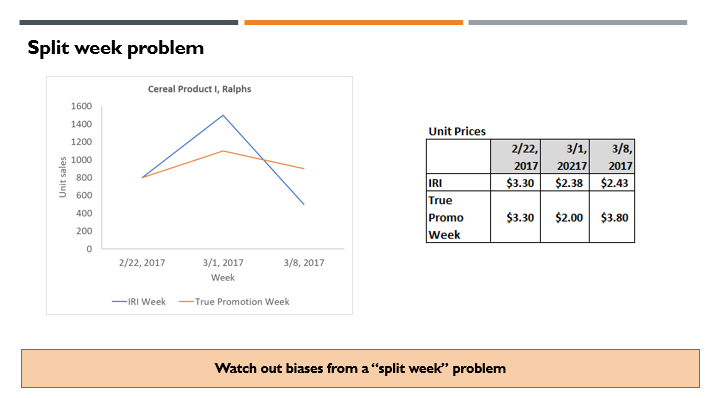

(1) Time — Day vs. Week: Weekly data are a very common choice in the industry, but I would recommend daily data. Weekly data would work fine and help with the modeling of low-sales velocity products. However, this can create “aggregation bias”, if you are not careful. This is more common for multi-market retailers or national manufacturers. Multi-market retailers (e.g., Kroger) typically grew with M&A and local chain banners tend to stick with different promotion calendars. When promotion calendar weeks (e.g., Wednesday to Tuesday) of certain local market chains are misaligned with a common database week (e.g., Sunday to Saturday), using the weekly POS data leads to biased estimates of promotion ROI and price elasticities (usually underestimated). This is visually demonstrated in Figure 1 by comparing typical IRI or AC Nielsen data with the split week problem with the ground-truth data (aggregated from another daily dataset with a proper promotion week definition.)

We call this data aggregation bias problem a “split week” problem since the real promotion week data are artificially split into two database weeks. Figure 1 above shows actual sanitized data in the cereal product category at Ralphs chain in Southern California. We compare IRI unit sales and price data (i.e., misaligned data) with promotion week aligned data (which is a proxy of properly aggregated data from Kroger: 84.51: We have created proxies for both by using household panel data for this example since Kroger 84.51 data are not accessible for research. However, this is based on multiple consulting project experiences.) With the blending of promotion week data with non-promotion week data, promotion peak volume is understated in IRI data. In contrast, off-promotion sales (a week following the promotion week) are overstated in IRI data. Net prices are overstated during the promotion week and understated in non-promotion week in IRI data. These patterns usually lead to underestimation of promotion ROI and downward biases in price elasticities. Even more, the net prices from IRI or AC Nielsen data do not correspond well to actual regular prices or promotion prices in the accounts with a “split week” problem, as shown in Figure 1.

Moreover, daily deals become more common recently, which results in a partial-week promotion. Daily data week will be more robust against aggregation biases from daily deals. If you work with a retailer or a manufacturer with a single promotion week definition without daily deals, weekly data would be appropriate as time grain.

(2) Product —Brand, UPC, vs. Brand-Pack: Most of the academic research on promotion modeling used brands as a level of analysis (Commercial Use of UPC Scanner Data: Industry and Academic Perspectives, Marketing Science, 1999). This is partly due to the fact that computing power was limited when promotion modeling was popular in Marketing Science in early 2000. However, the real product that you have to manage, and measure is at the UPC level. Thus, UPC is a more natural choice as a grain for the product. Moreover, you can find that price elasticities do change by different package sizes (e.g., 2L bottle vs. 12-ounce 12-pack cans) and flavors (e.g., Regular vs. Diet) within the same brand. For certain product categories, product line pricing is common, in which all UPCs within the same product line have the same regular prices and promotion prices. As long as prices and non-price marketing supports are common, it is fine to aggregate UPCs to a higher level. As an example, for a soft drink category, regular prices and promotional prices are maintained the same for all UPCs with different flavors (Coca-Cola Diet, Coca-Cola Regular) when the package size is the same. Brand/pack size aggregation can be proper in this case.

(3) Location — Store vs. Zone: The simplest choice here would be to use “store” as a grain since a single store should have the same promotion. This also gives you the flexibility to tailor promotions or pricing at the store level. On the flip side, the unit sales of the product at the store level are quite noisy (especially for tail UPCs with frequent zero sales per day or per week). Thus, in most cases, it is preferred to aggregate store-level data to higher geographical units as long as the promotion is homogeneous within the geographical units. Again, if you are not careful and aggregate stores with different promotion schedules, aggregation bias can result, which will distort promotion ROI measurements and price elasticity estimates (Using Market-level Data to Understand Promotion Effects in a Non-linear Model, Journal of Marketing Research, 1997) Most of the multi-market retailers assign promotion zones (and price zones) to stores. Within the same promotion zones, promotion prices are the same. Similarly, within the same price zones, regular prices are the same. Pricing departments (in charge of regular prices) are commonly separate from promotion departments (in charge of promotion prices), thus it is possible to observe differences in pricing zones and promotion zones. Ideally, a common zone both for regular prices/promotional prices are preferred, but you can differences from time to time. Thus, you can either use (1) promotion zone as modeling grain (if pricing/promotion zones are the same), or (2) pricing/promotion zone combination as modeling grain (if pricing zones are different from promotion zones.) This will ensure that you can avoid “aggregation” biases and make data more robust since random noises at store/product level will cancel out with the higher-level aggregation. After data aggregation is done, it is important to compare overall summary statistics (e.g., # of unique days, # of unique products, total revenue, total unit sales) before and after aggregation to ensure that there are no significant data loss or SQL coding errors. It also helps to plot time series to make sure that there are no irregular anomalies in the data. I will make this more concrete with actual POS data and code examples in the next article.

2. Filtering of data

For business datasets such as POS data, it is quite common to have outliers. Thus, outlier detection (based on typical anomaly detection algorithms with pre-specified thresholds) needs to be carried out to filter out outliers, which are most likely to be based on incorrect data entries. Some UPCs are failed products with almost no real sales. These UPCs add noise and are practically irrelevant to the business. Thus, it is common to apply unit sales thresholds to filter out UPCs with very low levels of sales. There can be only 1 or 2 inventories of certain UPCs from the previous season, which again is practically irrelevant. You can filter these UPCs out as well by checking # of weeks with unit sales. After filtering with several criteria, you can check what % of revenue or (equivalent) unit sales are maintained to ensure that you are not filtering data too much. Usually, 95% — 99% of revenue will be maintained after proper data filtering. In the next article, I will demonstrate filtering with actual POS data and Python code samples.

3. Feature engineering (derived columns)

In addition to aggregation and filtering, a major task in modeling dataset generation is creating derived feature columns, which are mapped to the causal demand model specification. Shown below are typical columns that you need to create for the simplest model as a toy example.

(1) Primary keys: UPC, Day, Promotion Zone ID

(2) Volume (in equivalent units): Unit sales x volume equivalent

(3) Own price: Unit price for the focal UPC

(4) Promotion tactics: Initially categorical variables (Must Buy 2, Buy 1 Get 1 Free, % Discount, Absolute Dollar Discount, etc.) This will be one hot-encoded to create separate dummy variables for each promotion tactic type.

(5) Non-price promotion: Feature indicator (0/1), Display level (High/Medium/Low), Coupon indicator (0/1). A binary indicator is the simplest. If more nuanced measurements are desired, you can separate the front page of features from the inside and back pages. Cases on the display (i.e., continuous variable) can be used instead of display levels. It is well known that a front-page feature on Sunday is more effective, especially with large product advertising sizes. However, this will cost more.

(6) Competing product prices: Ideally, you should figure out what sets of products are considered similar and put the prices of these products, which will change by focal UPC. However, this is difficult unless you have other datasets, which provide information for product similarity. Product similarity data can come from product switching data from loyalty cards for retailers, household panel datasets (e.g., Nielsen Homescan), Amazon search data, or text mining of product descriptions. Since own price elasticity (on average -2.5) is an order of magnitude large than cross-price elasticities (impact from the price changes of competing products, 0.1 ~ 0.3), it is fine to only control competing prices in an aggregate price index for initial promotion modeling journey. The aggregate competing price index can be computed by calculating (the revenue of products in the same subcategory) / (unit sales of products in the same subcategory) after excluding the focal product. Using constant weights is more robust, but this simple weighting works fine if you want to keep things simple. For an application at scale, a more rigorous way of computation sometimes can be intentionally avoided if the impact on the final results is small and computing costs are large (i.e., engineering decision.) However, this does require careful testing to ensure that potential loss of robustness is small and manageable.

(7) Weekly dummies: To properly control weekly seasonality, we can also include one-hot encoded weekly dummy variables. Specifying this at the category (or sub-category level) balances # of variables with modeling flexibility

(8) Weekday indicators: If you are using daily data, it is a good idea to include weekday indicators (e.g., Monday, Tuesday, and Sunday) to capture different shopping patterns across weekdays.

(9) Trend variable: It is fairly common to have underlying trends. The simplest thing you can do is to include the week number variable and its polynomials (e.g., week number, week number squared.) It is also possible to apply the LOESS procedure or Holt-Winters procedure (i.e., exponential smoothing) to decompose seasonality and trends after price control as pre-processing.

(10) Cost: To calculate promotion ROI, the cost of the product (i.e., wholesale prices for retailers, or COGS for manufacturers) and the cost of promotions should be included in the modeling dataset.

(11) Log transformation: The simplest work-horse model that works well in practice is a mixed effect log-log demand model. This model uses log(unit sales in volume equivalent) as the dependent variable and log(unit price) and price variables. Thus, you need to apply log transformation to price and unit sales variables.)

(12) (Optional) product attributes: If you have access to product attribute data (e.g., product stub file from IRI) with clear ideas on what product attributes matter, you can also include product attributes. This will allow you to make predictions for new products, which were never sold before as long as the new product can be expressed as a combination of existing product attributes. Including product attributes will also give you an idea of what product attributes are more popular vs. less popular.

4. What to watch out

(1) Check price variation: One of the common mistakes is equating the size of data records with the richness of the dataset. For price and promotion modeling, what matters more is whether you have good price variation over time (within the same UPC/store) for a large % of products. In the example in Figure 2, Walmart has a larger dataset, but the Best-buy dataset would be more useful due to frequent price variation. One useful summary statistic that you can calculate is the coefficient of variation of prices (i.e., relative standard deviation: mean / standard deviation X 100%). In one of the extreme examples, out of 15,000 products for dollar stores, only 300 products had a meaningful variation of prices to be useful for price modeling in one of my consulting projects.

(2) Ensure enough history: Seasonality and trends are very common. To properly control them, you need to have at least 2–3 years of data. Covid caused a structural break, which makes the identification of seasonality harder with the recent data.

(3) Aggregation bias: In the discussion above on modeling grain, you may have noticed that the central theme is avoiding “aggregation” bias. It is okay to aggregate as long as promotion conditions are homogeneous. However, if you start to aggregate data with different promotion conditions, you are starting to induce biases (usually along the direction of under-estimating price elasticities.)

(4) Cross-section only: There can be a request to use only cross-sectional data (multiple geographic units/products with a single time period) to conduct causal promotion demand modeling. This will be very difficult, which requires special modeling techniques (e.g., spatial econometrics) and strong assumptions. Most useful (causal) price variations in the data are from within product/store across time variations. Without multiple price variations with enough histories, it is not possible to measure promotion ROI or price elasticities properly.

(5) Dependency with modeling choice: There is a very strong dependency between model specification and modeling dataset creation. Therefore, it is very important to be clear about what model specifications you will try and be more inclusive about potential feature columns to create. Without proper planning, you may have to go back to modeling dataset creation and redo the work. This can be even more painful if you have to do data fusion and combine datasets with different levels of data (e.g. product similarity from loyalty card data + store-level POS data.)

In the next article, I will make these ideas more concrete by utilizing public POS data (Dominick’s dataset, Kilts Center of Marketing, University of Chicago) and SQL / Python code samples.

Note

Promotion modeling was considered a largely solved problem in academia around 1999. Academic research on promotion has been less active recently except for changing the causal demand modeling method from a log-log model to the aggregate discrete choice model. In contrast, large-scale adoption of promotion ROI measurement and promotion modeling as a solution only started around 2014 by major consulting firms in the United States. These models were implemented before, but those were limited in scope: only by a handful of retailers or manufacturers (e.g., CVS, Proctor & Gamble.) This is a very common pattern for the adoption of new methods from academia to the industry with computing power increase and more data education (lagged by 10–20 years.) The reality is that promotion modeling is far from a solved problem if you try to implement this on a large scale. The connection of causal ML demand modeling with promotion optimization is not studied extensively both in academia and practice.

Reference

- 8451: First-party retail data platform provided by Kroger. Includes store-level POS dataset and aggregate summary from loyalty cards, https://www.8451.com/

- Randolph E. Bucklin and Sunil Gupta, Commercial Use of UPC Scanner Data: Industry and Academic Perspectives, Marketing Science, 1999

- Markus Christen, Sachin Gupta, John C. Porter, Richard Staelin and Dick R. Wittink, Using Market-level Data to Understand Promotion Effects in a Non-linear Model, Journal of Marketing Research, 1997

- Dominick’s Dataset, Kilts Marketing Center, University of Chicago